Recently, neural networks have grown in popularity, with new architectures, neuron types, activation functions, and training methodologies emerging in research. However, keeping up with the frenzy of new work in this area can be challenging without a fundamental understanding of neural networks.

To understand modern techniques, we must first understand the smallest, most fundamental building block of these so-called deep neural networks: the neuron. We’ll look at how a single neuron works and how connecting several of them into a layer forms a neural network known as a Perceptron. We’ll write Python code (using NumPy) to create a Perceptron infrastructure and execute the learning algorithm from scratch.

What is a Neural Network?

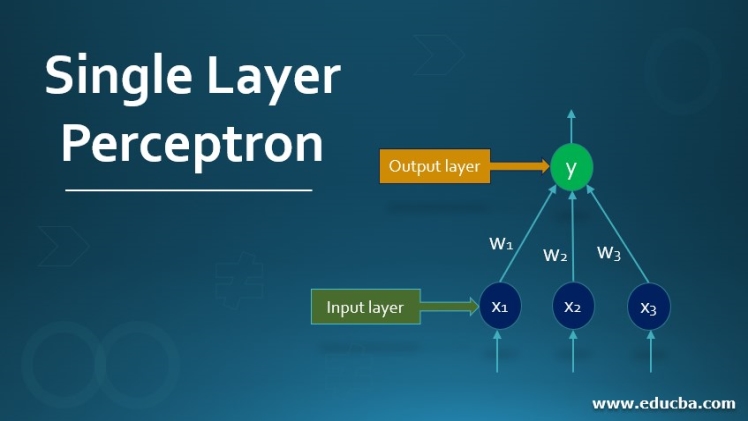

A neural network is formed when a group of nodes, or neurons, are joined together via weighted connections, loosely analogous to synapses. A typical artificial neural network has three kinds of layers: an input layer, one or more hidden layers, and an output layer. The input layer is made up of nodes that receive the inputs. Every neuron computes a value from its inputs, and each connection has a weight value associated with it. Inputs are passed from the input layer to the hidden layer, which consists of further neurons that transform them. The output layer provides the final outputs.

What is the learning algorithm?

A learning algorithm is an adaptive method by which a network of computing units organizes itself to carry out the required behavior.

Some of these algorithms do this by presenting the network with samples of the required input-output mapping. A correction step is then repeated until the network gives the required response. A learning algorithm can therefore be viewed as a closed loop in which examples are fed into the network and corrections are applied to it.

What is a Perceptron?

The Perceptron concept in artificial neural networks is based on the operating principle of the neuron, which is the brain’s fundamental processing unit. The neuron is composed of three main parts:

- Dendrites

- Cell body

- Axon

Origin:

The Perceptron is based on the neuron in the animal brain. Neurons are the basic computational unit of the animal brain. When billions of neurons are coupled, they form sophisticated neural networks. Dendrites are the data entry points for a neuron, while axon terminals are the neuron’s output.

Frank Rosenblatt invented the artificial neuron known as the Perceptron in 1957 and published it in 1958. The Perceptron is a simplified mathematical model of how neurons in our brains work: it takes several inputs (from sensory neurons), multiplies each input by a continuous-valued weight, and sums the results. The activation function then thresholds this weighted sum, outputting a ‘1’ if the sum is large enough and a ‘0’ otherwise.
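The model described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a definitive implementation: the function names, the weights, and the bias value are assumptions chosen by hand, and the "large enough" threshold is expressed as a bias term added to the weighted sum.

```python
import numpy as np

def step(z):
    """Step activation: output 1 if the sum is large enough (here, non-negative), else 0."""
    return 1 if z >= 0 else 0

def perceptron_output(x, w, b):
    """Multiply each input by its weight, sum the products, add the bias, then threshold."""
    return step(np.dot(w, x) + b)

# Hypothetical weights and bias, picked by hand so the neuron behaves like logical AND.
w = np.array([1.0, 1.0])
b = -1.5

inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
and_outputs = [perceptron_output(np.array(x), w, b) for x in inputs]
print(and_outputs)  # [0, 0, 0, 1]
```

Only the input (1, 1) produces a weighted sum (2.0) large enough to overcome the bias of -1.5, so it is the only input that yields a 1.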

What is a Perceptron learning algorithm?

The simplest form of a neural network is a Perceptron, which is a computational model of a neuron. In 1957, Frank Rosenblatt invented the Perceptron at the Cornell Aeronautical Laboratory. A Perceptron has one or more inputs, a processing step, and a single output.

The Perceptron concept is crucial in machine learning: it is an algorithm for the supervised learning of binary classifiers, and it is a type of linear classifier. Supervised learning is one of the most extensively studied learning problems. Each supervised training sample includes an input and its correct output (the label).

The aim is to train a model on correctly labeled data so that it can predict labels for future data. Classification, that is, predicting class labels, is one of the most common supervised learning problems.

The Perceptron is a linear classifier: a classification algorithm that makes predictions using a linear predictor function, which combines a set of weights with a feature vector. A binary linear classifier sorts data into two categories, so every training sample is assigned to one of two classes.

In its most basic version, the Perceptron algorithm is used for binary classification. The algorithm takes its name from its basic unit, the artificial neuron, which is also called a Perceptron.

The Perceptron learning algorithm can be applied to a range of machine learning problems, and doing so may reveal limitations that you were unaware of. This is true of the vast majority, if not all, of learning algorithms: they work well for some problems but not so well for others. Early single-layer Perceptron networks could not perform certain basic tasks. To resolve the issue, multi-layer Perceptron networks and new learning rules were introduced. Furthermore, if you grasp how the Perceptron works, you will find it much easier to understand more sophisticated networks.

When should Perceptron be used?

One might wonder what kinds of problems a Perceptron can tackle. A Perceptron can learn a function that correctly classifies the input data points, provided the two classes can be separated by a linear boundary.

In simple terms, a Perceptron can learn a linear function that separates data points into two classes. It learns this function by adjusting its weights based on an error function. This error (loss) function guides the weight changes toward a mapping between the inputs and the outputs.
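A rough sketch of this weight adjustment, using the classic perceptron update rule, might look like the following. The function names, the learning rate `lr`, and the epoch limit are assumptions made for illustration. The example trains once on logical OR, which a straight line can separate, and once on XOR, which no straight line can separate.

```python
import numpy as np

def predict(X, w, b):
    """Apply the step activation to the weighted sum of every row in X."""
    return (X @ w + b > 0).astype(int)

def train_perceptron(X, y, lr=0.1, epochs=20):
    """Classic perceptron rule: on each mistake, shift weights by lr * error * input."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for xi, target in zip(X, y):
            error = target - (1 if np.dot(w, xi) + b > 0 else 0)
            if error != 0:
                w += lr * error * xi
                b += lr * error
                mistakes += 1
        if mistakes == 0:  # every sample is classified correctly, so stop early
            break
    return w, b

X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y_or = np.array([0, 1, 1, 1])   # linearly separable
y_xor = np.array([0, 1, 1, 0])  # not linearly separable

w_or, b_or = train_perceptron(X, y_or)
or_predictions = predict(X, w_or, b_or)
print(or_predictions)  # [0 1 1 1]

w_xor, b_xor = train_perceptron(X, y_xor)
xor_accuracy = np.mean(predict(X, w_xor, b_xor) == y_xor)
print(xor_accuracy)  # stays below 1.0, since no single line separates XOR
```

The error here is simply the difference between the target label and the prediction, so the weights only change when a sample is misclassified.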

Steps to perform a Perceptron learning algorithm

- Feed the features the model is to be trained on into the first layer of the Perceptron as inputs.

- Multiply each input by its weight, and calculate the sum of these products.

- Add the bias value to this sum to shift the output function.

- Pass the resulting value to the activation function.

- The value returned by the activation function is the Perceptron’s output.
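The steps above can be traced one at a time in NumPy. This is only a sketch: the feature values, weights, and bias below are hypothetical numbers picked for illustration, and a simple step function stands in for the activation function.

```python
import numpy as np

# Step 1: the input features (hypothetical values).
x = np.array([0.5, -1.0, 2.0])

# Hypothetical weights and bias; in practice these are learned during training.
w = np.array([0.4, 0.3, 0.1])
b = -0.05

# Step 2: multiply each input by its weight and sum the products.
weighted_sum = np.sum(w * x)  # 0.2 - 0.3 + 0.2, roughly 0.1

# Step 3: add the bias to shift the result.
z = weighted_sum + b  # roughly 0.05

# Step 4: pass the value to the activation function (a step function here).
output = 1 if z > 0 else 0

# Step 5: the activation's result is the Perceptron's output.
print(output)  # 1
```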

Endnote

To conclude, Perceptrons are the most basic type of neural network: they accept inputs, weight each input, add the weighted inputs, and apply an activation function to the sum. We can use Perceptrons to accomplish binary classification. One drawback of Perceptrons is that they can only handle linearly separable problems, and in the real world many problems are not linearly separable. Because Perceptrons are the cornerstone of neural networks, understanding them now will be helpful when learning about deep neural networks! It will also be beneficial while you look for exciting developer positions.